During WWII the US Army (there was no Air Force yet) faced an interesting problem: where should they put armor on bombers in order to reduce casualties? Armor is protective against bullets but it is heavy. Too much and the planes won’t perform well. Too little leaves the planes exposed.

To help with questions like this, the US had assembled a group of mathematicians and statisticians who applied statistical analysis to the war effort. The group was called the Statistical Research Group (SRG) and was housed in a building near Columbia University. The question of where to place armor on planes was brought to the SRG and assigned to Abraham Wald, an Austrian Jew who fled the Nazis before WWII and taught math and statistics at Columbia.

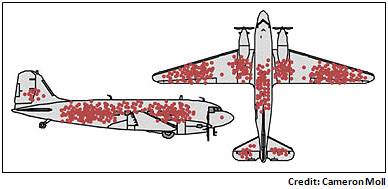

The Army gave Wald useful data — they had recorded the average number of bullet holes per square foot on planes that had come back from bombing missions. The data revealed that there were many bullet holes in the fuselage and wings, but few holes in the engines. The data looked like this:

The Army was planning on adding armor strategically to the parts of the plane that tended to get most riddled with bullets and asked Wald to help them determine exactly where the armor should go.

Wald had a different view. He said the armor shouldn’t go where the bullet holes are. Instead, it should go where there aren’t holes. His insight was that the data covered only the planes that made it back. Across all the planes flying missions, bullet holes should be roughly evenly distributed: there is no reason why anti-aircraft guns fired from thousands of feet away would hit just the wings and fuselage. The holes missing from the engines of the returning planes were on the planes that didn’t return, because planes hit in the engines tended not to make it home. That is where the armor should go.
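For readers who like to see the effect in numbers, here is a minimal simulation sketch of Wald's reasoning. Everything in it is an assumption made for illustration: the three sections are treated as equal-sized targets, the loss probabilities in `LOSS_PROB` are invented, and none of it is Wald's actual data or method.

```python
import random

# Hypothetical numbers chosen for illustration only; they are not Wald's data.
SECTIONS = ["engine", "fuselage", "wings"]
# Assumed chance that a single hit in each section brings the plane down.
LOSS_PROB = {"engine": 0.6, "fuselage": 0.1, "wings": 0.1}


def simulate(num_planes=10_000, hits_per_plane=3, seed=0):
    """Return (hits on all planes, hits on returning planes) per section."""
    rng = random.Random(seed)
    all_hits = {s: 0 for s in SECTIONS}
    survivor_hits = {s: 0 for s in SECTIONS}
    for _ in range(num_planes):
        # Each hit is equally likely to land on any section.
        hits = [rng.choice(SECTIONS) for _ in range(hits_per_plane)]
        # The plane returns only if none of its hits turns out to be fatal.
        survived = all(rng.random() >= LOSS_PROB[s] for s in hits)
        for s in hits:
            all_hits[s] += 1
            if survived:
                survivor_hits[s] += 1
    return all_hits, survivor_hits


def share(counts):
    """Convert raw hit counts into each section's share of the total."""
    total = sum(counts.values())
    return {s: counts[s] / total for s in counts}


if __name__ == "__main__":
    all_hits, survivor_hits = simulate()
    print("all planes:      ", share(all_hits))
    print("returning planes:", share(survivor_hits))
```

Under these assumptions, engines take about a third of the hits across all planes, yet their share of the holes counted on returning planes is much smaller. Someone looking only at the returning planes would conclude that engines are rarely hit, which is the mistake Wald caught.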

Wald’s insight was to identify a logical fallacy called “survivorship bias.”

Survivorship bias “is the logical error of concentrating on the people or things that made it past some selection process and overlooking those that did not, typically because of their lack of visibility.” Source. Survivorship bias is commonly overlooked and can lead to bad decisions. Keeping the bullet hole story in mind is a powerful way to make better decisions. Here are a few examples:

Investing: A study by Vanguard found that only 55% of equity mutual funds were still in existence after 15 years. The other 45% were poor performers that had been closed or merged into other funds. This lets investment managers tout that most of their funds outperform the market, because their poor-performing funds have been closed and no longer show up in the track record.
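The arithmetic behind that claim can be sketched in a few lines. This is a toy model, not the Vanguard study: the returns are randomly generated, the average fund is assumed to have no edge over the market, and we assume the funds that get closed are exactly the worst performers. The only figure taken from the text above is the 55% survival rate.

```python
import random


def simulate_funds(num_funds=1000, survival_rate=0.55, seed=0):
    """Return (excess returns of all funds, excess returns of survivors)."""
    rng = random.Random(seed)
    # Hypothetical annualized return minus the market's, in percent.
    # Mean zero: on average, these made-up funds neither beat nor trail it.
    excess = [rng.gauss(0.0, 2.0) for _ in range(num_funds)]
    # Assumption: the funds closed or merged away are the worst performers.
    keep = round(num_funds * survival_rate)
    survivors = sorted(excess, reverse=True)[:keep]
    return excess, survivors


def fraction_beating_market(excess_returns):
    """Share of funds whose return is above the market's."""
    return sum(r > 0 for r in excess_returns) / len(excess_returns)


if __name__ == "__main__":
    all_funds, survivors = simulate_funds()
    print("all funds launched:  ", fraction_beating_market(all_funds))
    print("funds still standing:", fraction_beating_market(survivors))
```

In this sketch about half of all the funds launched beat the market, which is what you would expect from funds with no skill. Among the survivors the fraction is far higher, even though nothing about the managers changed. The only thing that changed is which funds are left to be counted.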

Good to Great: The business book Good to Great by Jim Collins is widely considered one of the best business strategy books of all time. In the book, Collins highlights strategies and management techniques followed by some of the world’s most successful companies. A major criticism of the book is that Collins didn’t research whether unsuccessful companies had also adopted those strategies and techniques — he only focused on the successful companies.

Marketing: Our firm’s name is “The St. Louis Trust Company.” We’re concerned that it makes us sound like a regional bank and doesn’t reflect that we’re a top national multi-family office. In deciding whether to change our name, we thought about surveying our clients to get their views on it. But then we realized that doing so would be like armoring where the bullet holes are: our existing clients got past our name and worked with us. What we can’t know is how many potential clients never contacted us because our name steered them away. Side note: we are changing our name to “St. Louis Trust and Family Office,” a tweak that should be clarifying while retaining the equity of our brand.

This graphic is a good one to keep in mind when making decisions based on data — question whether the data only focuses on the survivors:

Great commentary. Consumer companies, especially with small market share, often survey their customers about preferences and change. Research would be better spent asking buyers who choose a competitor’s product what they value; therein lies the opportunity.